Simplicity in a Moment of Creation

Embedding a copilot inside the DigitalOcean database create flow, where users were already going

The business asked for fewer clicks. The actual ask was harder. Setting up a managed database on DigitalOcean is a genuine act of provisioning. You pick an engine, a version, a region, a plan, a storage size, an availability tier, a name, and a project. Each of those is a decision with real cost and performance consequences. Cutting clicks by cutting choices would have been the wrong frame.

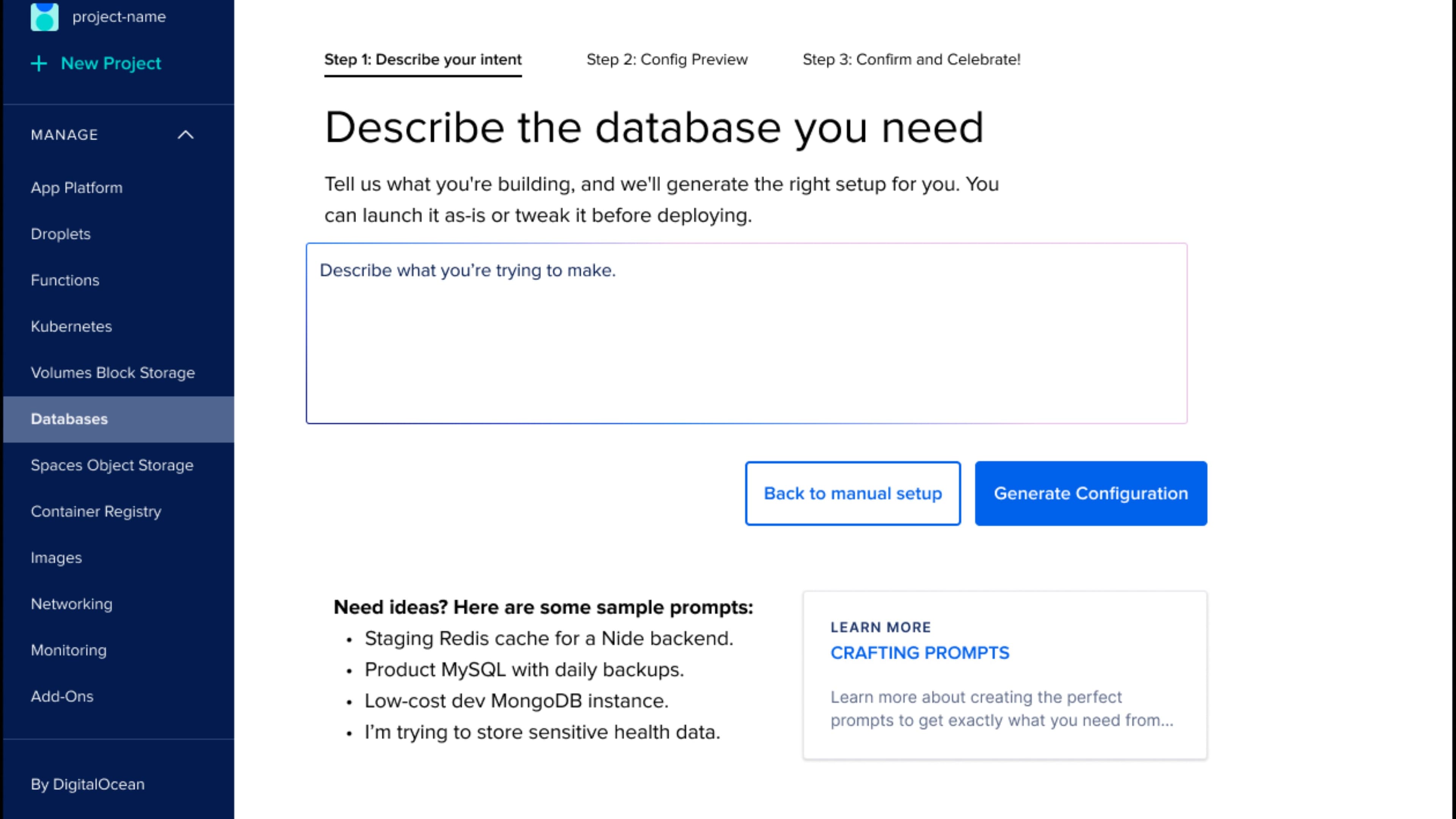

So we turned to the user and said: just tell us what kind of database you need. We'll configure it for you. No forms, no guesswork, just smart defaults and fast deployment.

Easy to say. The work was making it real without sanding off the legitimate complexity underneath.

Where to put it

Inside the create flow. Not in a chatbot.

The first design decision was where the copilot should live. The default move in 2025 was to put AI in a chat panel and call it done. We chose a different path: the copilot lives inside the existing database create flow. When you click Create Database, the first thing you see is a prompt input on the left and a manual configuration column on the right, side by side. Type your intent, or click manual setup. Both paths behave the way the rest of the product does, and neither feels like a side door.

There's no inherent value in changing the user's expected action just because it's easier for us. Users come to the create flow to make a database. We meet them there. The copilot is the new way to drive that flow, sitting next to the old way, and choosing between them is one click.

The assumption that conversational UI is the right frame for everything is something the industry imported wholesale from ChatGPT. It's often a poor fit for production software. A database create flow has a known shape: a finite set of decisions, an outcome the user wants to verify before pressing the button, and consequences if anything is wrong. A configuration form is the right surface for that work, and the copilot's job is to fill in that form on the user's behalf and let them check the work.

Embedded means the AI shows up where users are already going. The user doesn't have to learn a new surface; the copilot writes the form and waits.

That positioning is the entire reason this case study exists. The same pattern is now being applied to Kubernetes setup and Droplet provisioning. It will keep working as long as we keep meeting users in the moment of creation.

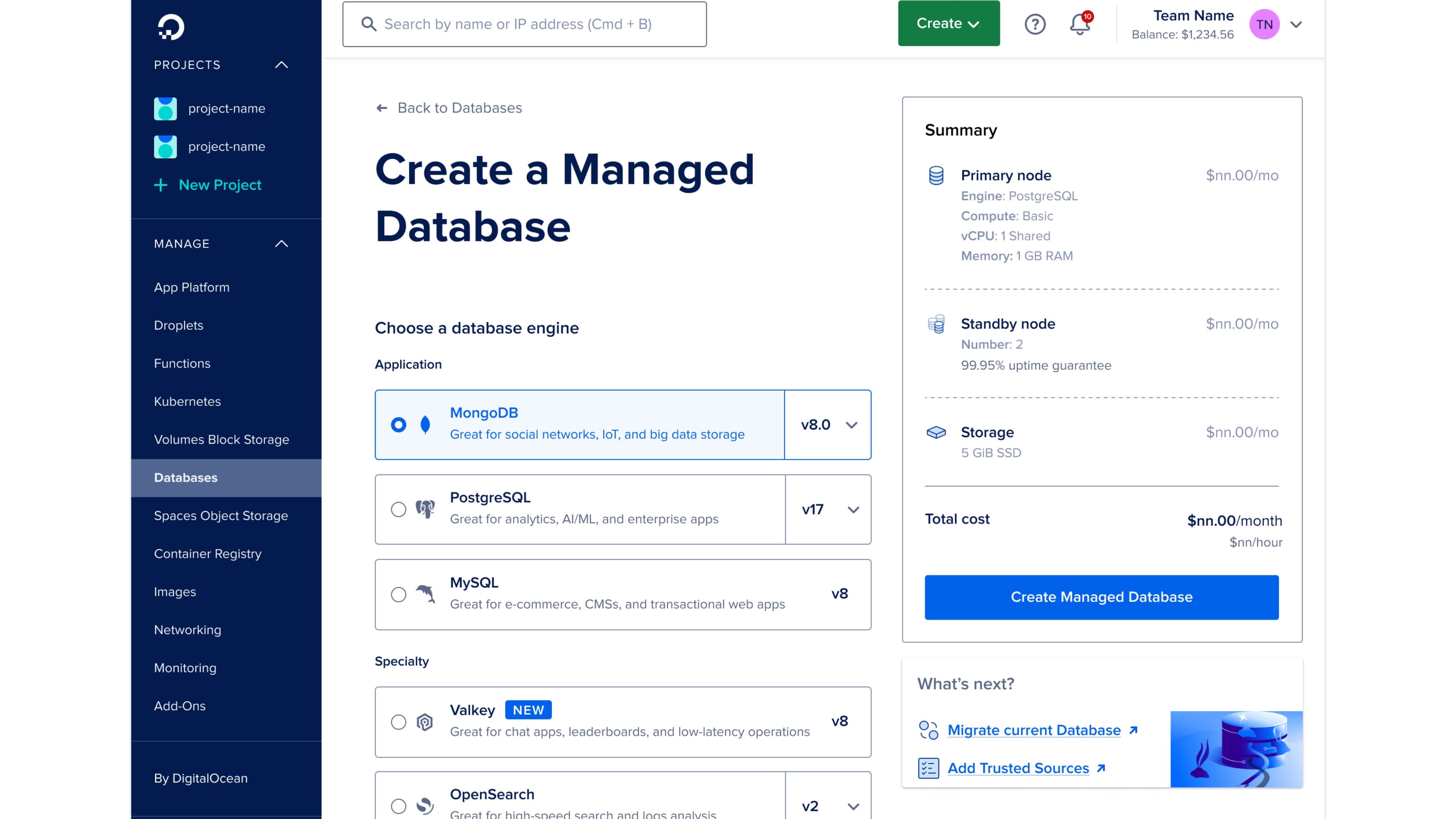

The starting point

What manual setup actually looked like.

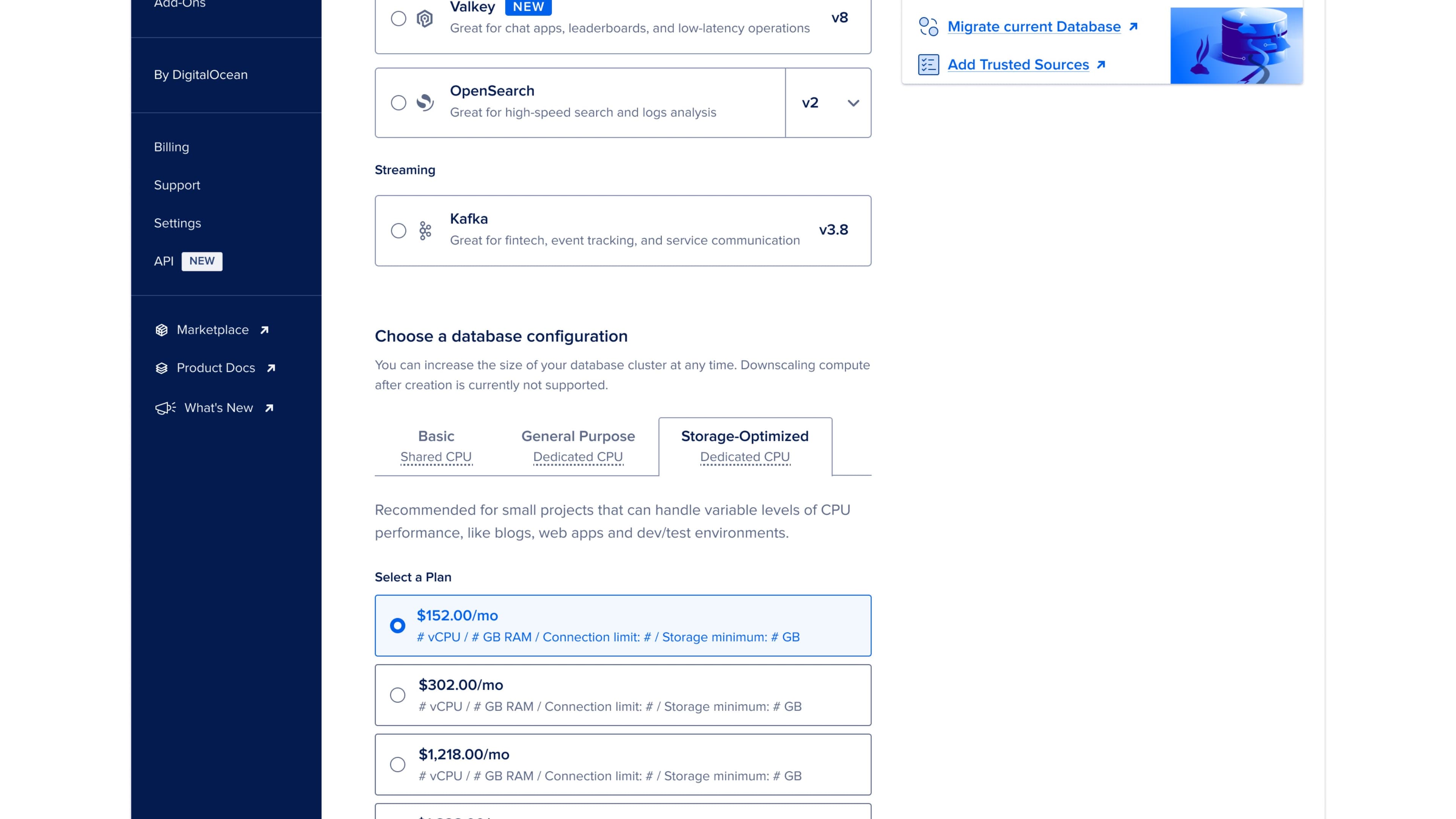

Before we redesigned anything, I made the team walk through the manual create flow with fresh eyes. Engine picker. Plan tiers running into thousands of dollars a month, and storage values that mean something only if you already know what they mean. Standby node options behind a recommendation badge. Datacenter region. VPC network. Project. Name. The whole flow took twelve fields and a fluency with cloud vocabulary that most of our newer customers don't have. Every field is necessary. Every field is also a chance for the user to bail.

The redesign

Three steps. Plain language. Editable everything.

The new flow is three steps and reads like a sentence. Describe what you want. Review what we configured. Confirm and create.

Step one is a single text input that asks the user to describe their intent. We pre-load four sample prompts to lower the cold-start tax: a staging Redis cache, a production MySQL with backups, a low-cost dev MongoDB, a sensitive-health-data scenario. The samples are aspirational on purpose. They show the user that the copilot understands shape and constraints, well beyond keyword matching.

The user types. The system parses. Step two opens up the result.

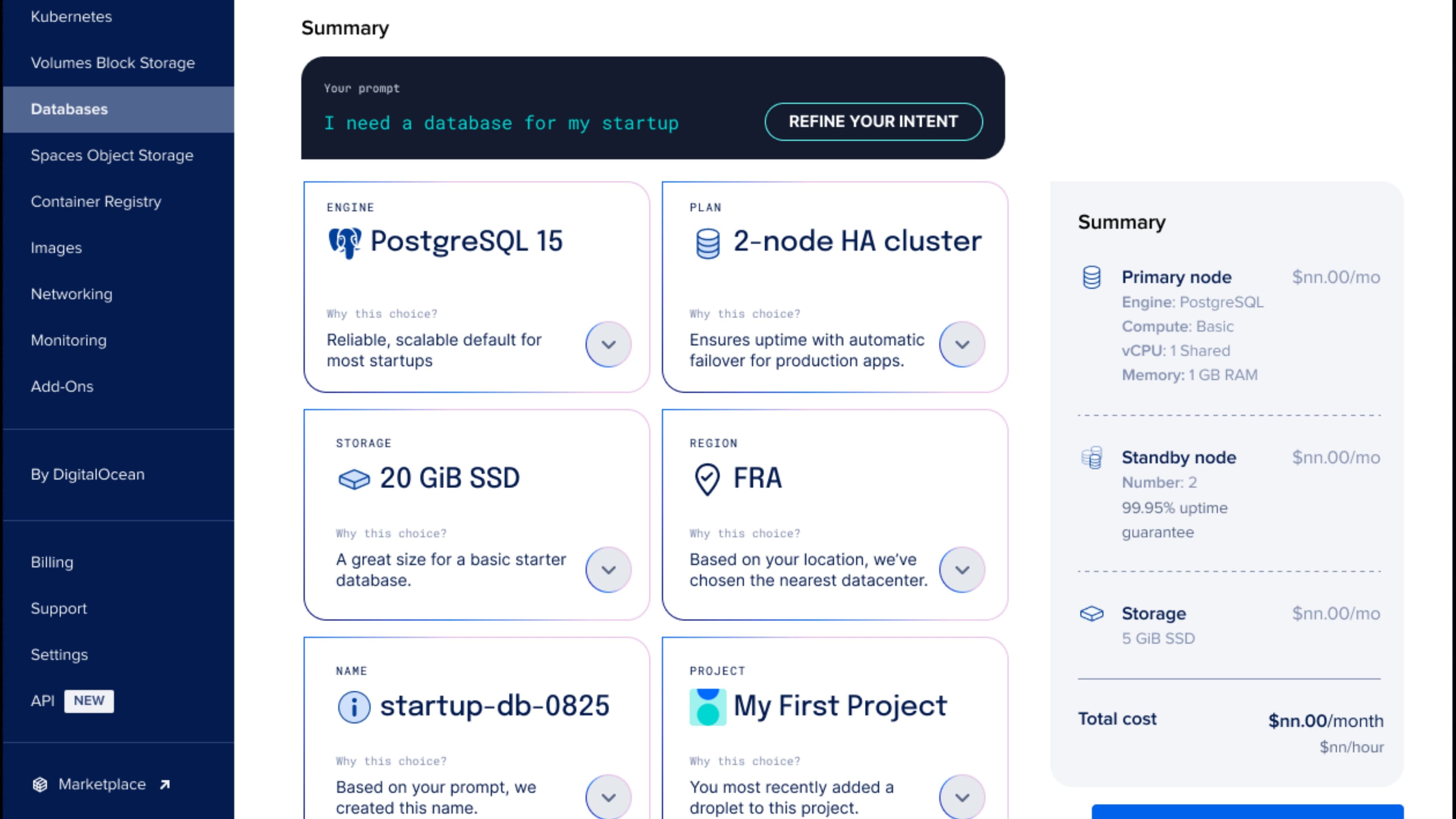

Step two is six summary cards laid out in a grid, one per decision: engine, plan, storage, region, name, project. Each card shows the choice the copilot made and a “Why this choice” affordance that opens a short explanation grounded in the user's prompt or, where the prompt is silent, in other signals such as the user's IP. PostgreSQL because the prompt mentioned analytics. A two-node HA cluster because production was implied. The Frankfurt region because the user's IP suggested EU traffic. The user can accept any card or override it inline, without a round-trip.
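To make the card structure concrete, here is a minimal TypeScript sketch of how each summary card might pair a pre-filled choice with its rationale, and how an inline override could update one card locally with no call back to the copilot. The `SummaryCard` shape, the `source` field, and the `overrideCard` helper are hypothetical illustrations, not DigitalOcean's actual schema.

```typescript
// Hypothetical model for the step-two summary cards.
// Field names and values are illustrative assumptions, not a real schema.
type CardKey = "engine" | "plan" | "storage" | "region" | "name" | "project";

interface SummaryCard {
  key: CardKey;
  value: string; // the copilot's pre-filled choice
  rationale: string; // copy shown behind "Why this choice"
  source: "prompt" | "context"; // inferred from the prompt, or from signals such as IP
  overridden: boolean; // set once the user edits the card inline
}

// Apply an inline override locally: no round-trip, and the original draft
// is left untouched so it can still be compared or restored.
function overrideCard(
  cards: SummaryCard[],
  key: CardKey,
  value: string,
): SummaryCard[] {
  return cards.map((card) =>
    card.key === key ? { ...card, value, overridden: true } : card,
  );
}

// Two of the six cards, shown for brevity.
const draft: SummaryCard[] = [
  {
    key: "engine",
    value: "PostgreSQL",
    rationale: "Your prompt mentions analytics.",
    source: "prompt",
    overridden: false,
  },
  {
    key: "region",
    value: "Frankfurt",
    rationale: "Your traffic appears to come from the EU.",
    source: "context",
    overridden: false,
  },
];

const edited = overrideCard(draft, "engine", "MySQL");
console.log(edited[0].value, edited[0].overridden); // MySQL true
```

Returning a new array rather than mutating the draft is one way to keep the copilot's original suggestion available after the user takes the pen.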

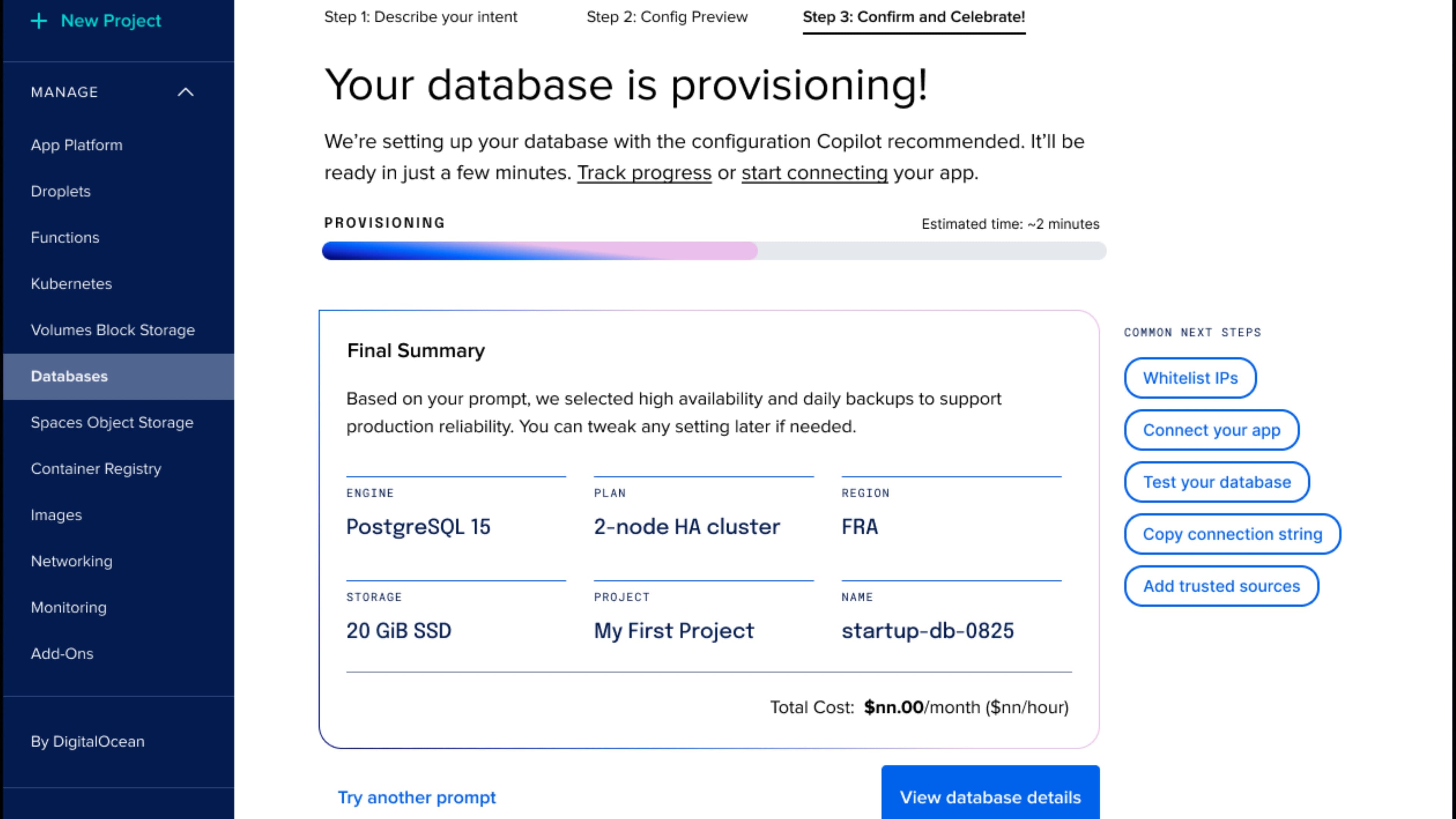

Step three is confirmation and creation. The provisioning screen shows a progress bar with a realistic time estimate, plus a final summary the user can screenshot if they need to share it. The next-steps card on the right gives them the four things they're most likely to want immediately: whitelist IPs, connect their app, test the connection, and copy the connection string.

The whole flow takes about thirty seconds for a confident user. For a less confident user, the “Why this choice” affordances turn the flow into a guided tutorial. For an advanced user who wants to override everything, the manual flow is one click away from any step.

The hard parts

Tone and control.

The two design problems that took the most iteration were tone and control. Tone is what the AI sounds like in its rationale copy. We landed on confident, brief, and explicit about what was inferred from the prompt. No hedging. No apologizing. No “based on your input it seems like.” The user typed a sentence; the system either understood it or didn't. Show the work either way.

Control is harder. The user has to feel like they could intervene at any point, even when the copilot got everything right and they're about to click Create. We solved this with three patterns: inline editing on every summary card, a one-click rerun prompt at the top of step two for users who want to try again with a different sentence, and a one-click manual override at any time.

Inline edit was the biggest win. Each card is a real input, with the copilot's choice pre-filled. Click the engine card and change it to MySQL, and the rest of the configuration recalculates instantly, because the copilot also knows the implications of that change. The user is never locked into the AI's choice. They're handed a working draft and given the pen.
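The recalculation behavior can be sketched as a small dependency table: each derived field declares what it depends on, and an edit re-derives only those dependents, leaving anything the user has already changed by hand alone. This is a minimal sketch under assumed rules; the fields, the placeholder `version` and `name` derivations, and the `applyEdit` helper are hypothetical, and the actual copilot may reason about implications quite differently.

```typescript
// Flat key-value configuration (hypothetical fields).
type Config = Record<string, string>;

// Each rule derives one field from the current configuration.
interface Rule {
  field: string;
  dependsOn: string[];
  derive: (config: Config) => string;
}

// Placeholder rules for illustration only; values are not real defaults.
const rules: Rule[] = [
  {
    field: "version",
    dependsOn: ["engine"],
    derive: (c) => (c.engine === "MySQL" ? "mysql-default" : "pg-default"),
  },
  {
    field: "name",
    dependsOn: ["engine", "environment"],
    derive: (c) => `${c.engine.toLowerCase()}-${c.environment}`,
  },
];

// Apply one edit, then re-derive every field that depends on it,
// skipping fields the user has locked by editing them directly.
function applyEdit(
  config: Config,
  locked: Set<string>,
  field: string,
  value: string,
): Config {
  const next: Config = { ...config, [field]: value };
  for (const rule of rules) {
    if (rule.dependsOn.includes(field) && !locked.has(rule.field)) {
      next[rule.field] = rule.derive(next);
    }
  }
  return next;
}

const initial: Config = {
  engine: "PostgreSQL",
  environment: "prod",
  version: "pg-default",
  name: "postgresql-prod",
};

// The user renamed the cluster earlier, so "name" is locked.
const updated = applyEdit(initial, new Set(["name"]), "engine", "MySQL");
console.log(updated.version, updated.name); // mysql-default postgresql-prod
```

This handles a single level of dependency. If derived fields fed into other derived fields, the rules would need to run in dependency (topological) order.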

Rerun is for the cases where the prompt was the wrong shape. The user types “low-cost dev MongoDB instance,” sees the configuration, realizes they actually need backups, types again with that constraint added. Two seconds, full reconfiguration. The history of past prompts stays visible so the user can compare.
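Comparing attempts is easier when each rerun is stored alongside the configuration it produced. A minimal sketch, assuming a hypothetical `Run` record and a flat key-value configuration (the `backups` field and its values are illustrative), lists which fields changed between two runs:

```typescript
// Hypothetical record of one prompt run and the configuration it produced.
interface Run {
  prompt: string;
  config: Record<string, string>;
}

// Return the fields whose values differ between two runs, including
// fields that exist in only one of them.
function diffRuns(a: Run, b: Run): string[] {
  const keys = Array.from(
    new Set(Object.keys(a.config).concat(Object.keys(b.config))),
  );
  return keys.filter((key) => a.config[key] !== b.config[key]);
}

const history: Run[] = [
  {
    prompt: "low-cost dev MongoDB instance",
    config: { engine: "MongoDB", backups: "off" },
  },
  {
    prompt: "low-cost dev MongoDB instance with backups",
    config: { engine: "MongoDB", backups: "on" },
  },
];

const changed = diffRuns(history[0], history[1]);
console.log(changed); // [ 'backups' ]
```

Highlighting only the changed fields keeps the comparison readable when most of the configuration stays the same between attempts.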

Manual override is the safety valve. At any step the user can drop down to the original twelve-field flow and configure by hand. The copilot's choices come along as starting values. Nothing is lost.

Together these three patterns give the user a real handle on the AI's work. The copilot is doing the heavy lifting; the user is still in charge.

What it proved

AI doesn't have to take over the screen.

This is one of the first non-chat copilots DigitalOcean shipped. It's the proof point that AI can be useful without commandeering the surface. An embedded assistant inside the create flow. Visible where the user is already working. Quiet where they don't need help.

The pattern transferred. Kubernetes setup is being redesigned with the same three-step structure. Droplet create is next in the queue. Each new flow takes less design time because the trust criteria, the inline-edit pattern, the “Why this choice” affordance, and the manual fallback are already shared assets.

What I'm proudest of is the philosophical shift. The team's default question stopped being “should we add AI” and became “where in the flow does AI carry weight without getting in the way.” A much better question, and it produces much better software.

The copilot is doing real work. The user is still the one who clicked Create. That was deliberate.

The business asked for fewer clicks. We gave them a flow where the user types one sentence and gets a working database two minutes later, with every choice editable and every choice explained. The right kind of work, in the right place, at the right time.

Why

- DigitalOcean
- Cloud and Developer Tools
- AI
- Design Leadership
- Product Strategy
- Interaction Design
- Product Design
- UX Design
- Wireframing
- AI Experience Design
- AJ Zichella (Design)
- Soyun Park (Design)
- Kevin Carrillo (Engineering)