The DigitalOcean Copilot Journey

From a docs widget to a copilot platform: a year and a half of building AI that users actually trusted

The story begins with a small widget on the documentation site. It was a helpful proof of concept: an AI assistant that could answer questions using our technical docs. Useful enough that users found it. Limited enough that the team kept asking what it could become.

What it could become turned out to be much bigger. We reimagined the experience as a copilot embedded in the product itself, scaled it across surfaces, and eventually turned it into a platform other companies could use. That progression took about a year and a half of design work, and at every step the core question stayed the same: how do you build AI that users trust enough to actually rely on?

The framework I held the team to was four words. The copilot had to be embedded, explainable, editable, and trustworthy. Each one of those words shaped a different part of the design.

Cloud infrastructure is genuinely complicated, and pretending otherwise has never helped a user. The work was making AI carry some of that complication instead of adding to it.

The criteria

Embedded. Explainable. Editable. Trustworthy.

Four words on a slide that the team came back to every week. Embedded means the assistant lives where the work happens, never as a destination of its own. Explainable means every answer shows its sources and reasoning. Editable means every output can be corrected, refined, or rejected by the user. Trustworthy is the outcome the other three produce: when AI is in your product, users keep relying on it only if they can tell what it knows and what it doesn't.

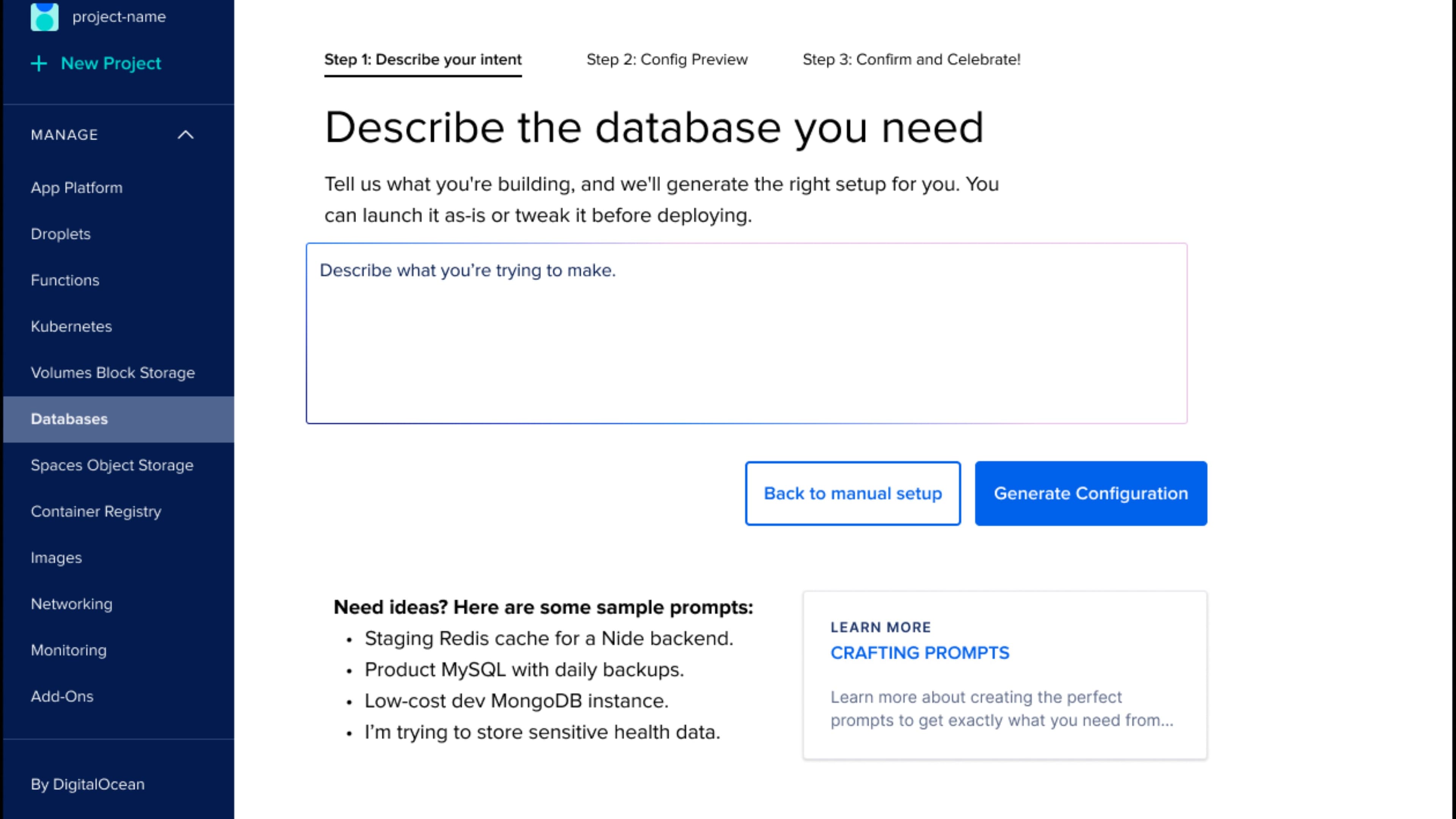

Where it started

A widget on the documentation site.

The first version was a small, contained surface. A user opened the docs site, asked a question in plain language, and got an answer with citations back to the documentation that produced it. Quiet, focused, low-stakes. It was the right place to start because it set the bar: every response had to be traceable to a real source. No hallucinations. No invented commands. No fabricated flag names. The grounding work had to be visibly correct before we earned the right to put AI anywhere else in the product.

The leap

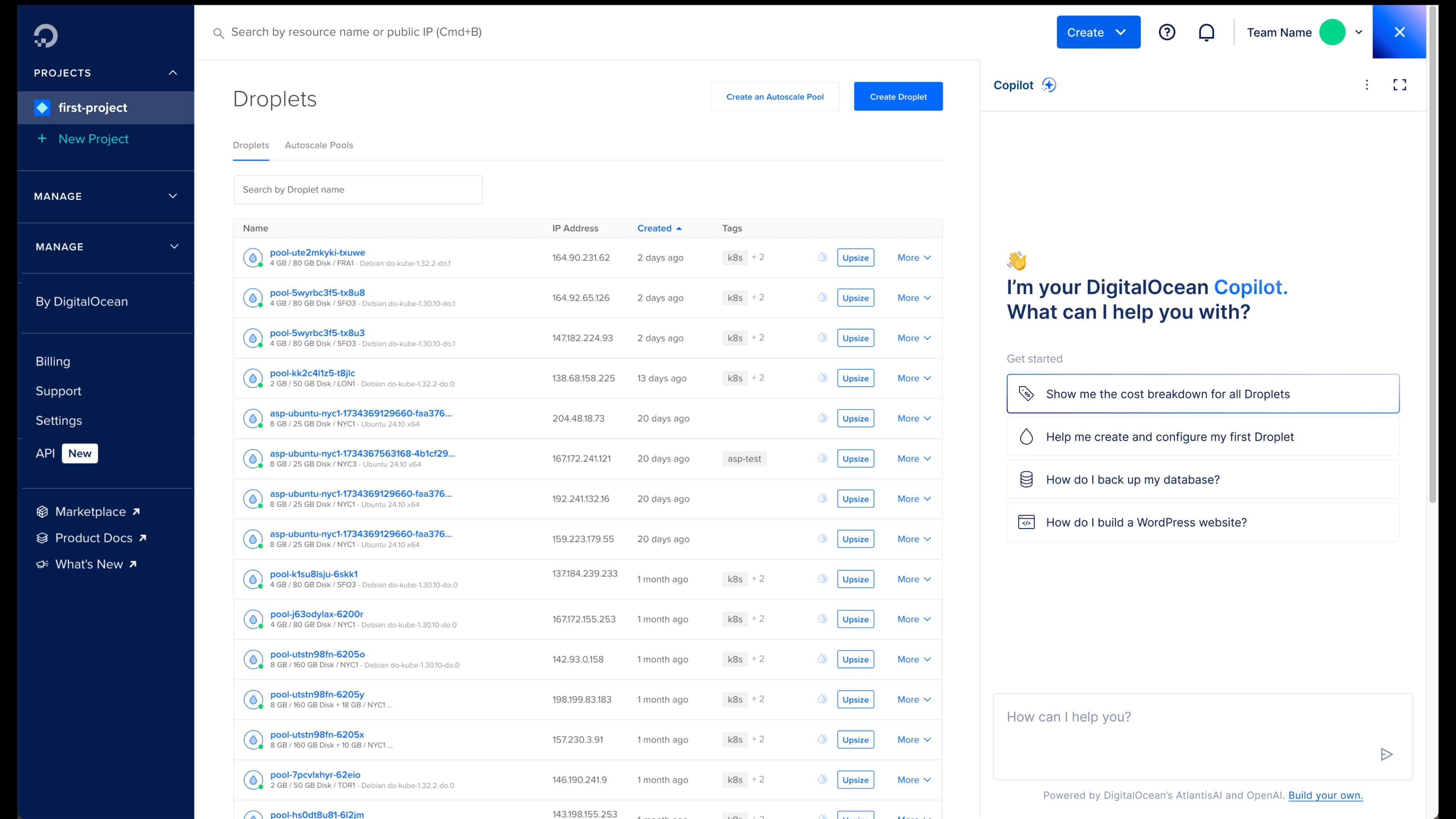

From a chatbot to a UX layer.

The leap was deciding the docs widget couldn't stay in the docs. The team had been treating the assistant as a chatbot. I pushed for a different framing: the assistant is a UX layer, available wherever a user needs help, able to act on their account when that's appropriate, and never required to get the work done. That reframe unlocked the rest of the work. We stopped designing one feature and started designing a presence that could show up across the product without taking it over.

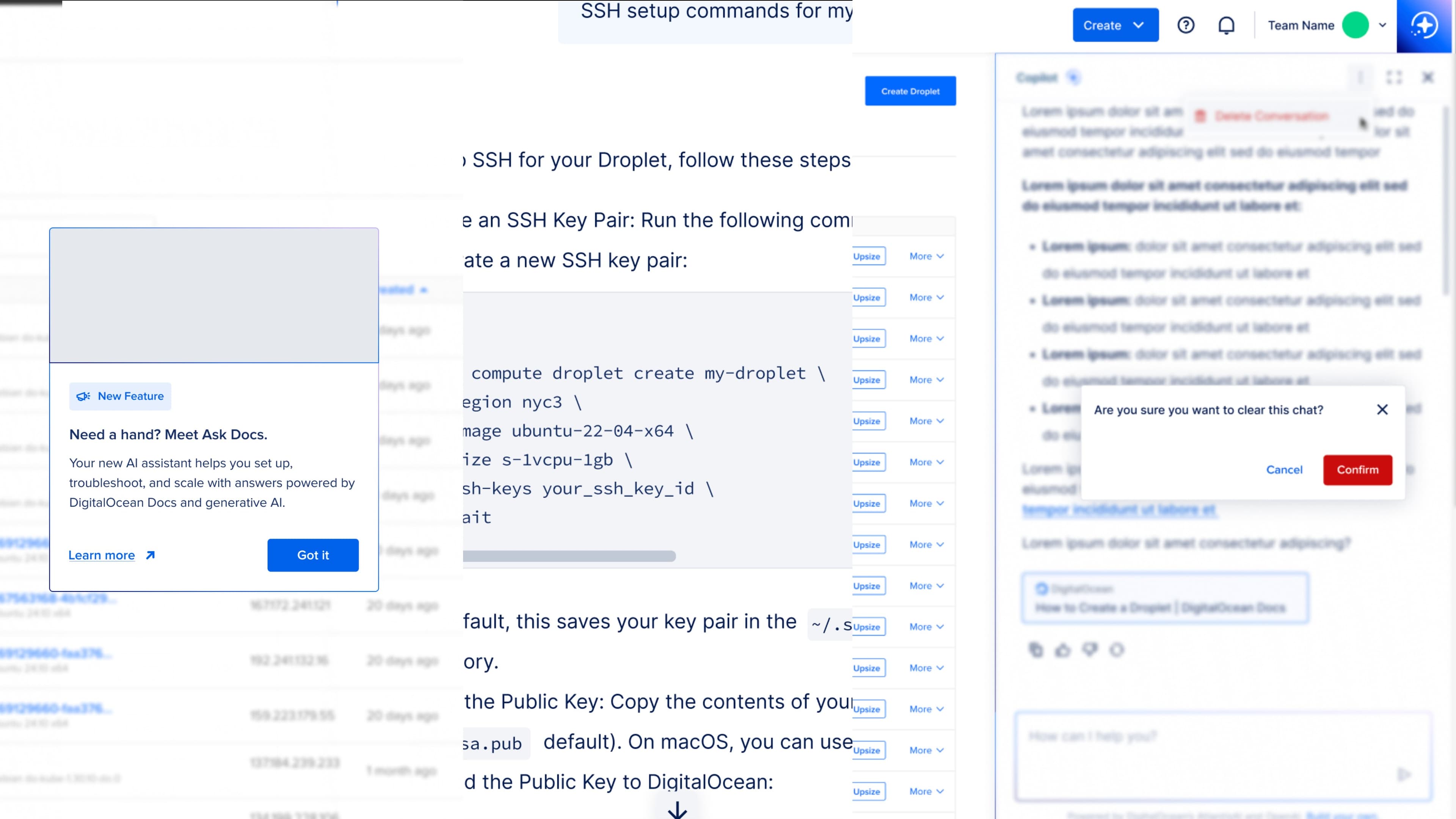

The embedded version lives in the control panel as a docked panel that the user can summon and dismiss. It knows what page you're on. It knows what resources you have. It knows your role. It can explain a chart on the billing page, walk you through creating your first Droplet, or generate a config file you copy into your terminal.

Critically, it doesn't try to replace the surfaces that already work. The Droplets list is still the Droplets list. Billing is still billing. The copilot adds capacity; it doesn't redirect the user away from where they were going.

We also built three persistent affordances around it: a context indicator showing what the copilot can see, a suggested-prompts row to lower the cold-start tax, and a “cite my sources” toggle that keeps grounding visible by default. Trust is built in small moves like these. Marketing can't substitute for them.

The result is an assistant that behaves like a colleague who has read every doc and watched the user work. It's helpful when called on, quiet when not.

The product

Then we shipped it to everyone else.

Strategic partners started asking how they could build something similar with their own documentation. We had a usable kernel of work, a real product surface, and a thesis about embedded AI that other companies wanted to apply. So we productized it. The customer-facing version, DocsBot, lets a company create their own documentation copilot with their branding, their analytics, and their training. The hardest part of designing it was getting the onboarding right, because the user we were now designing for might never have trained an AI before.

The industry assumption I kept hearing was that “regular” users couldn't train a bot. I disagree. Most users are AI-curious and willing to try, as long as they have guidance. So one of my contributions was the Golden Dataset onboarding pattern. Users define their ideal responses through real examples: tone, accuracy, the answers they'd give if a customer asked them directly. The bot trains against those examples instead of generic web content, and the result is a copilot that sounds like the company that built it.

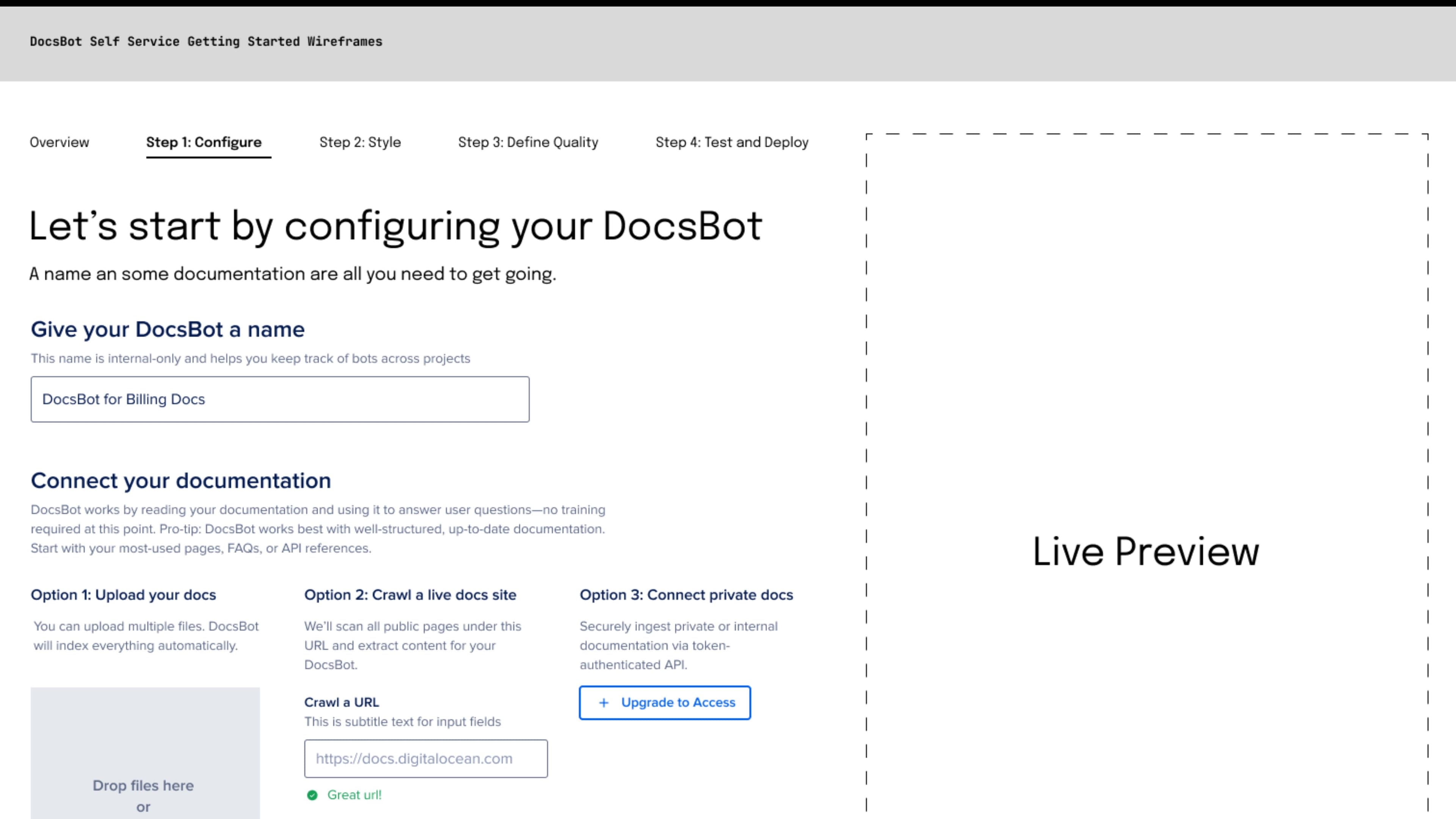

The configure step is small on purpose. Three ways to connect documentation. One brand decision. One name field. The user makes a few clear choices and moves on.

The style step is where the bot starts looking like the company. Colors, voice, logo, suggested first prompts. Live preview is required here. Every choice the user makes updates the preview immediately, so the gap between configuration and outcome stays closed.

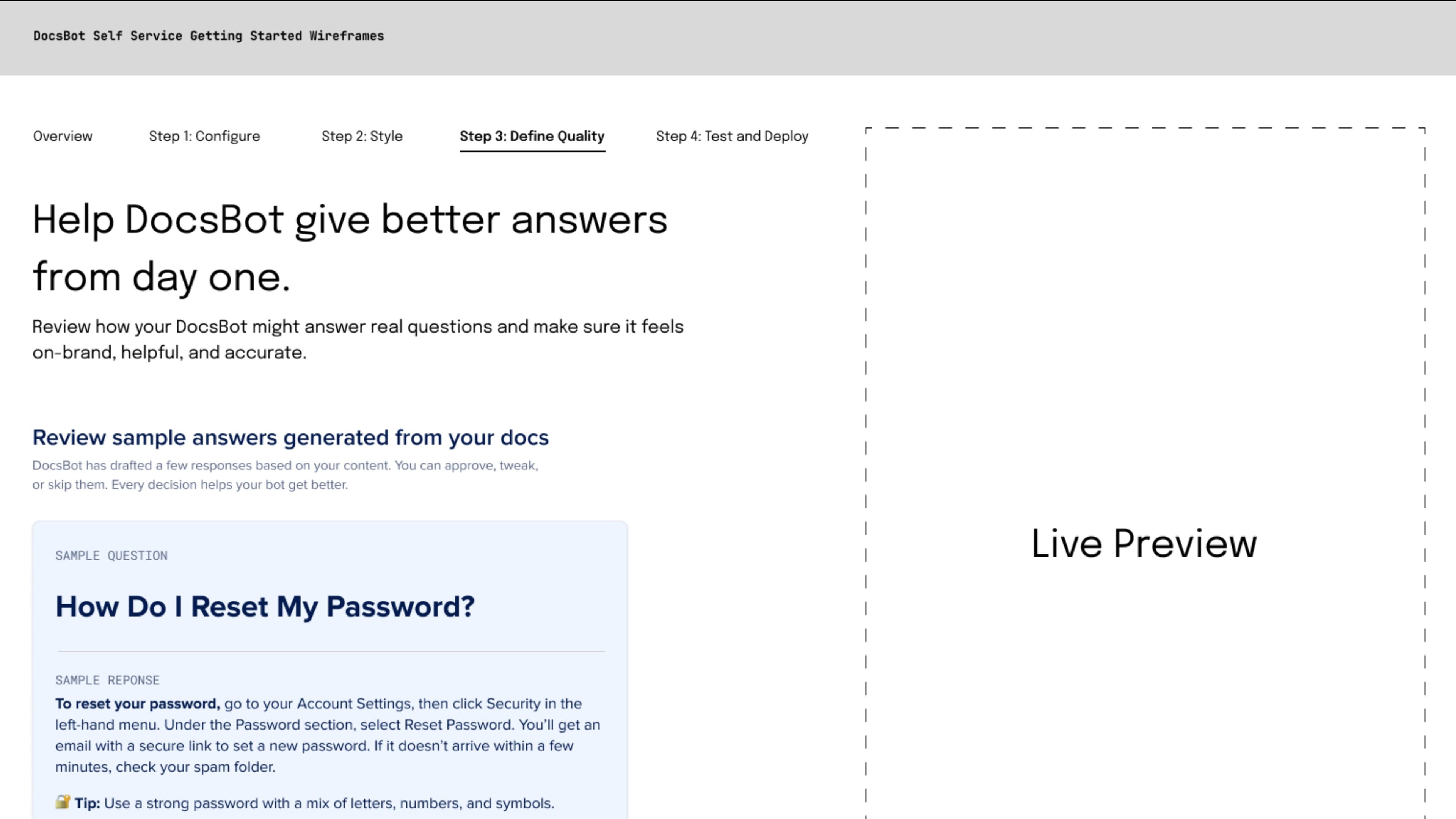

The training step is where the Golden Dataset comes in. The user reviews sample answers, approves the ones that sound right, edits the ones that don't, and skips the ones they want to come back to. Each review teaches the bot something specific about how the company speaks. After about thirty examples, the tone is consistent enough that customers can't tell the difference between the bot and a person.

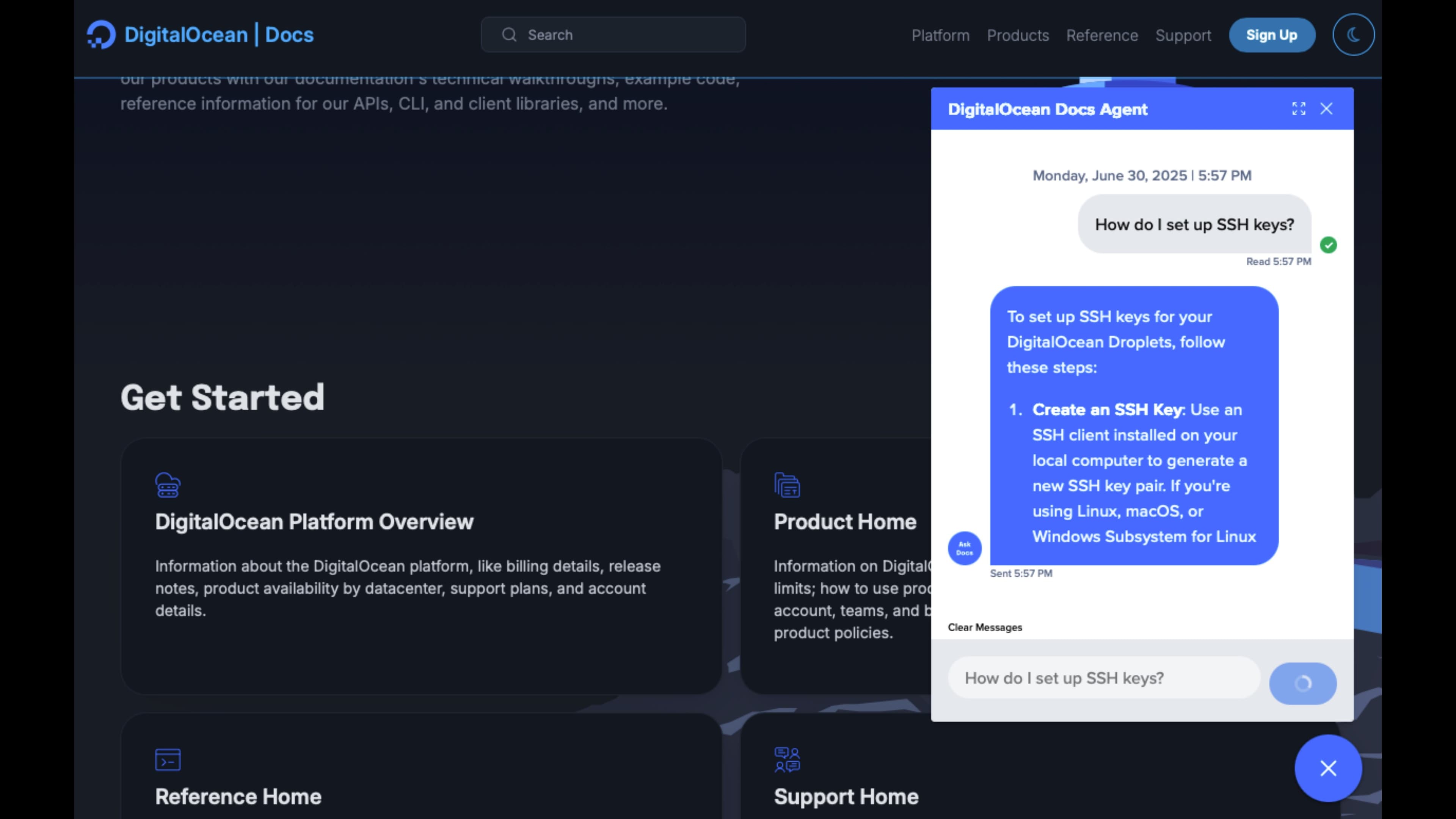

What it became

A platform, not an endpoint.

The work that started as a docs widget became something more useful: a platform that product managers, designers, and engineers across DigitalOcean now build on top of. The Database Create Copilot was the first feature to use it. The next round of copilot surfaces came faster, because the trust criteria, the design patterns, and the engineering scaffolding were already in place. The team didn't have to relitigate what good AI design looked like every time someone wanted to add it to a flow.

What I'm proudest of is the cultural shift inside the design organization. AI stopped being a special-case conversation and became a normal design surface. We use the same critique vocabulary for it. We hold it to the same trust standards. We test for the same emotional outcomes. That mainstreaming is what lets a team ship AI features at production quality without needing a separate “AI team” bottleneck.

The four words on the slide are still on the wall. They're how the team explains the work to new hires, to executives, to the customers using DocsBot, and to themselves. Embedded, explainable, editable, trustworthy. Those words mean something now, because they describe how the product actually behaves.

If you're building anything with AI in it, those four are worth borrowing.

The next surface is already in flight. The platform is doing its job.

The first time a customer told me the copilot felt like “a real coworker who actually read the docs,” I knew the criteria had carried us through. Embedded is location. Explainable is sources. Editable is humility. Trustworthy is the gift the user gives back when the first three are real.

Why

- DigitalOcean

- Cloud and Developer Tools

- AI

- Design Leadership

- Product Strategy

- AI Experience Design

- Product Design

- UX Design

- Design Systems

- Wireframing

- AJ Zichella (Design)

- Soyun Park (Design)

- Isabel Shic (Design)

- Kevin Carrillo (Engineering)